Instance Deformable Metal Splatter

Our ongoing project on instance-aware dynamic 3D Gaussian splatting on Apple devices, based on my friend's (Li et al.) paper TRASE [3DV 2026].

This joint project with Yun-Jin is an extended fork of MetalSplatter, which renders 3D Gaussian splats on Apple devices with Metal support.

Deformable 3DGS

The core of this project builds on Deformable 3D Gaussians, which extends 3D Gaussian Splatting to dynamic scenes. The key idea is to learn a canonical set of Gaussians together with a deformation network that predicts per-Gaussian position, rotation, and scale deltas conditioned on each Gaussian’s canonical 3D position and a time parameter t. At each timestep, the deformation network transforms the canonical Gaussians into their deformed state, which enables the reconstruction of dynamic scenes from monocular video.

The deformation network is an MLP with 8 layers of width 256, using sinusoidal positional encoding on both the xyz input (10 frequency bands) and the time input (10 frequency bands), with a skip connection at layer 4. Three output heads predict the position delta (d_xyz), rotation delta (d_rotation as a quaternion), and scale delta (d_scale), which are applied additively to the canonical Gaussian parameters.
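To make the input layout concrete, here is a minimal sketch of the sinusoidal positional encoding in plain Python. It assumes the common NeRF-style convention (frequencies 2^k, with the raw input concatenated in front); both choices, and the function name `positional_encoding`, are assumptions for illustration rather than a description of the exported model.

```python
import math

def positional_encoding(values, num_bands=10, include_input=True):
    """Map each value v to sin(2^k * v) and cos(2^k * v) for k in [0, num_bands)."""
    # Assumption: the raw input is kept in front of the encoded features.
    encoded = list(values) if include_input else []
    for k in range(num_bands):
        freq = 2.0 ** k
        encoded.extend(math.sin(freq * v) for v in values)
        encoded.extend(math.cos(freq * v) for v in values)
    return encoded

# Canonical position (3 values) and time (1 value), 10 frequency bands each.
xyz_features = positional_encoding([0.1, -0.4, 0.7])   # 3 + 3 * 2 * 10 values
time_features = positional_encoding([0.25])            # 1 + 1 * 2 * 10 values
mlp_input = xyz_features + time_features
print(len(xyz_features), len(time_features), len(mlp_input))
```

Under these assumptions the first layer of the MLP would see 63 position features and 21 time features per splat.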

In our implementation, we port this deformation network to run entirely on-device using Apple’s MetalPerformanceShadersGraph (MPSGraph) framework. The network weights are exported from the PyTorch model into a flat binary file and loaded as MPSGraph constants. The full computation graph, including the positional encoding, dense layers, and output heads, is reconstructed and pre-compiled into an MPSGraphExecutable for efficient GPU inference. Custom Metal compute shaders handle extracting the canonical Gaussian parameters as MLP inputs and applying the predicted deltas back onto the Gaussians, including computing the 3D covariance matrix from the deformed quaternion and scale for rendering. The MLP runs in batches of 16384 splats to manage GPU memory. This allows real-time playback of dynamic Gaussian Splat scenes on iPhones, iPads, and Macs.
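The batching and the additive position update can be sketched on the CPU as follows. This is only an illustration of the control flow: `deform_in_batches` and `constant_shift` are hypothetical names, and `constant_shift` stands in for the MPSGraph executable, which in the app runs on the GPU.

```python
def deform_in_batches(canonical_xyz, predict_deltas, batch_size=16384):
    """Run the delta predictor on fixed-size chunks and add d_xyz to each position."""
    deformed = []
    for start in range(0, len(canonical_xyz), batch_size):
        batch = canonical_xyz[start:start + batch_size]
        deltas = predict_deltas(batch)  # one d_xyz triple per splat in the batch
        for position, delta in zip(batch, deltas):
            deformed.append(tuple(p + d for p, d in zip(position, delta)))
    return deformed

batch_sizes = []

def constant_shift(batch):
    """Hypothetical stand-in for the network: shifts every splat by 0.5 along y."""
    batch_sizes.append(len(batch))
    return [(0.0, 0.5, 0.0)] * len(batch)

points = [(float(i), 0.0, 0.0) for i in range(40000)]
moved = deform_in_batches(points, constant_shift)
print(batch_sizes)
```

The last batch is simply shorter than the others, so no padding is needed in this sketch; a pre-compiled graph with a fixed input shape might instead require padding the final batch.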

FP16 Support and Cluster Visualisation

We later added support for instance-aware Gaussian splats by integrating TRASE cluster support, which enables cluster visualisation and cluster-based selection of splats. Additionally, the deformation network can optionally run in FP16, which makes inference about 2x faster. The clustering workflow allows interactive selection of regions based on the TRASE clusters, as visualised below:

MobileCLIP Text Querying

Inspired by the functionality of the TRASE GUI, we added support for text querying of the Gaussian splats using MobileCLIP. In the TRASE GUI, Grounded SAM is run on the rendered image to detect objects of interest independently of the TRASE clusters; the detections are then merged with the clusters based on the IoU overlap between the reprojected splats and the detected objects. While this workflow is ideal on a GPU machine, it cannot run in real time on a mobile processor, which led us to use MobileCLIP for text querying instead. To do so, we extract the per-cluster bounding box from the rendered image and run MobileCLIP on the cropped images to obtain image features for each cluster separately. To improve robustness, we further mask out regions in the bounding box crop that do not belong to the instance.
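Once each cluster has an image embedding, a text query can be matched against them. The sketch below assumes the usual CLIP-style matching by cosine similarity; the embeddings are tiny made-up vectors rather than real MobileCLIP features, and the names `cosine_similarity` and `rank_clusters` are hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm > 0.0 else 0.0

def rank_clusters(text_embedding, cluster_embeddings):
    """Return (cluster_id, score) pairs sorted from best to worst match."""
    scores = {
        cluster_id: cosine_similarity(text_embedding, embedding)
        for cluster_id, embedding in cluster_embeddings.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Toy 3-dimensional embeddings, one per cluster crop (illustrative values only).
cluster_embeddings = {
    0: [0.9, 0.1, 0.0],
    1: [0.0, 1.0, 0.2],
    2: [-0.5, 0.2, 0.8],
}
text_embedding = [0.1, 0.9, 0.1]

ranking = rank_clusters(text_embedding, cluster_embeddings)
best_cluster = ranking[0][0]
print(ranking)
```

Because the cluster embeddings only change when the rendered crops change, they can be cached and reused across queries, so a new query only needs one text encoding and a handful of dot products.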

We note that rendering the splats into an image introduces some noise, which can reduce MobileCLIP's performance compared to natural images. We also noticed that our rendering quality was worse than expected, which further hurt MobileCLIP's performance and led us to look for bugs in the implementation and improve the rendering quality of the framework (as described in the next section).

We visualise examples of the real-time cluster encoding and text querying on a MacBook M5 Pro and an iPhone 17 Air below:

Improved Rendering Quality

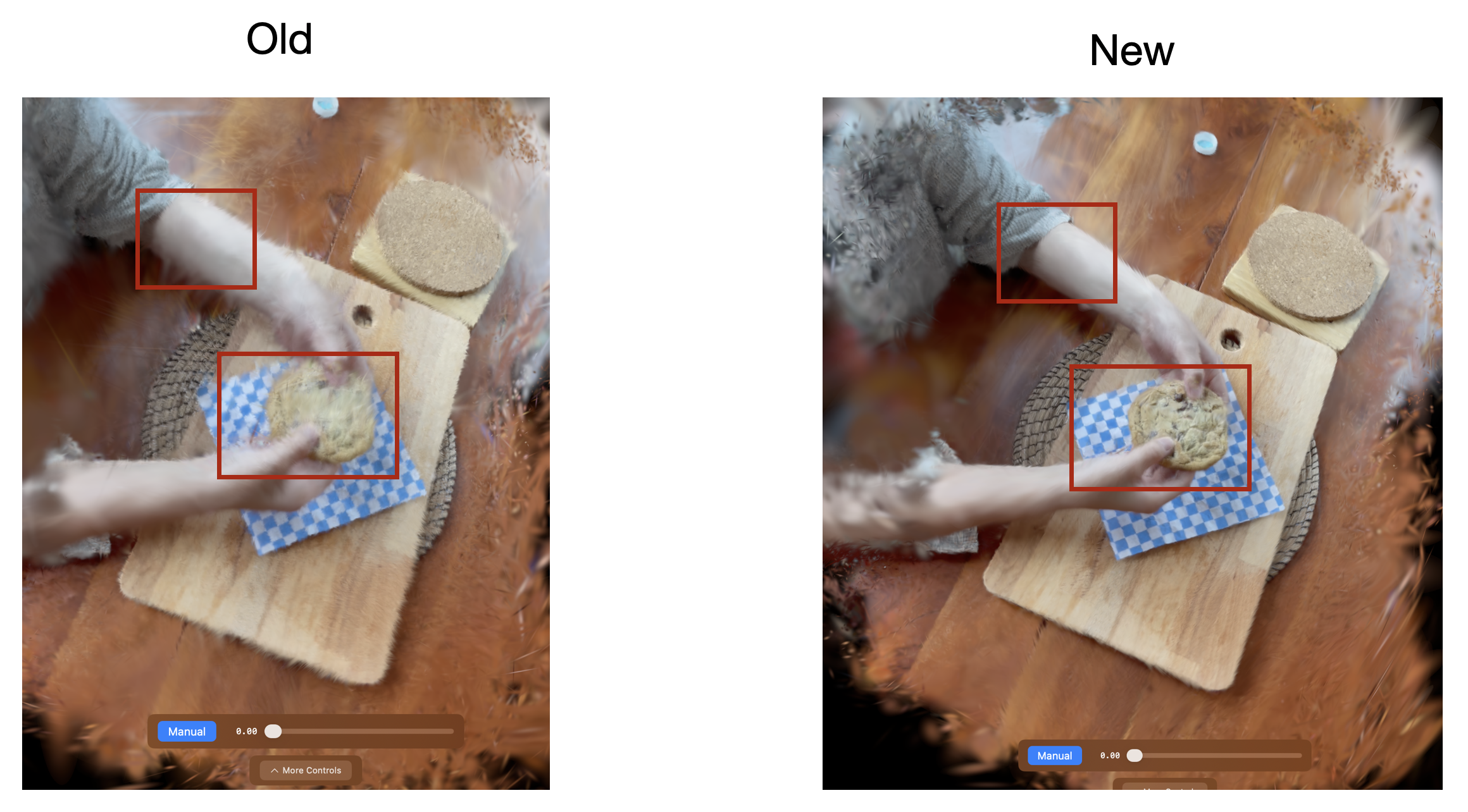

When implementing extensions to the framework, we noticed that the same ply files looked different when deformed by the PyTorch network (and visualised in spark.js) than when deformed and rendered in our app. Upon further investigation of the code, I found the rotation matrix to be implemented as R^T rather than R. As a result, the covariance matrix was computed as Sigma = R^T * S * R rather than Sigma = R * S * R^T. The two expressions only agree for isotropic Gaussians; because the Gaussians are generally anisotropic, the splats were rendered with the inverse rotation applied. We further removed the np.exp() on the splat scale, as the exponential is already applied when loading the ply file; applying it twice led to double exponential scaling of the splats, which introduced noisy artifacts.
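The effect of the transposed rotation can be checked with a small numeric example. The sketch below assumes a (w, x, y, z) quaternion ordering and takes S to be the diagonal matrix of squared scales, as in the standard 3D Gaussian Splatting formulation; both are assumptions about the notation above, and the helper names are hypothetical.

```python
import math

def quaternion_to_rotation(w, x, y, z):
    """Rotation matrix of a quaternion (normalised first); (w, x, y, z) order assumed."""
    n = math.sqrt(w * w + x * x + y * y + z * z)
    w, x, y, z = w / n, x / n, y / n, z / n
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]

def transpose(m):
    return [list(row) for row in zip(*m)]

def covariance(rotation, scale):
    """Sigma = R * S * R^T with S = diag(scale^2) (assumed convention)."""
    s = [[scale[i] ** 2 if i == j else 0.0 for j in range(3)] for i in range(3)]
    return matmul(matmul(rotation, s), transpose(rotation))

# 45 degree rotation about the z axis, anisotropic scale.
half_angle = math.pi / 8
R = quaternion_to_rotation(math.cos(half_angle), 0.0, 0.0, math.sin(half_angle))
scale = (2.0, 1.0, 0.5)

correct = covariance(R, scale)             # R * S * R^T
swapped = covariance(transpose(R), scale)  # R^T * S * R, the buggy variant
print(correct[0][1], swapped[0][1])
```

In this example the off-diagonal xy term flips sign between the two variants, which corresponds to the ellipsoid being tilted in the opposite direction. With an isotropic scale such as (1.0, 1.0, 1.0) both variants give the same matrix, which is why such a bug can go unnoticed on near-spherical splats.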

We visualise the improved rendering quality in deformation mode below:

Optional Runtime Speedup by Selectively Running the Deformation Network on a Subset of Splats

Inspired by ideas such as those in the paper “MAPo: Motion-Aware Partitioning of Deformable 3D Gaussian Splatting for High-Fidelity Dynamic Scene Reconstruction”, we implemented a simple separation of the scene into static and dynamic regions. For each scene, we pre-compute per-splat motion vectors with the deformation network and compare the per-step displacement of each splat against a threshold, which the user can set interactively in the app to trade off speedup against quality. This approach achieves a speedup of over 5x, as it avoids running the deformation network on static regions of the scene.
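The thresholding step can be sketched as follows. The sketch assumes each splat has a pre-computed list of positions over time and uses the largest per-step displacement as the motion measure; the choice of maximum (rather than, say, the mean) and the function names are assumptions for illustration.

```python
import math

def max_step_displacement(trajectory):
    """Largest distance a splat moves between two consecutive timesteps."""
    steps = zip(trajectory, trajectory[1:])
    return max((math.dist(a, b) for a, b in steps), default=0.0)

def dynamic_mask(trajectories, threshold):
    """True for splats that move more than `threshold` in at least one step."""
    return [max_step_displacement(t) > threshold for t in trajectories]

# Two toy splats sampled at three timesteps each (illustrative values only).
trajectories = [
    [(0.0, 0.0, 0.0), (0.001, 0.0, 0.0), (0.001, 0.001, 0.0)],  # nearly static
    [(1.0, 0.0, 0.0), (1.2, 0.0, 0.0), (1.5, 0.1, 0.0)],        # clearly moving
]

mask = dynamic_mask(trajectories, threshold=0.01)
dynamic_indices = [i for i, is_dynamic in enumerate(mask) if is_dynamic]
print(mask, dynamic_indices)
```

Only the splats in `dynamic_indices` would then be passed through the deformation network at each frame, while the remaining splats keep their canonical parameters. Raising the threshold shrinks the dynamic set and increases the speedup, at the cost of freezing splats with small motion.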

Please note the “mask static” button click and the runtime fps of the deformation network in the top right corner of the videos below:

Feel free to give us a star and add issues for feature requests or bug reports :)

Please also see the TRASE paper for more details on the instance-aware deformable 3D gaussian splatting and the TRASE repo.

References

- Yun-Jin Li, Mariia Gladkova, Yan Xia, and Daniel Cremers. TRASE: Tracking-free 4D Segmentation and Editing. In Proceedings of the International Conference on 3D Vision (3DV), 2026.
- Ziyi Yang, Xinyu Gao, Wen Zhou, Shaohui Jiao, Yuqing Zhang, and Xiaogang Jin. Deformable 3D Gaussians for High-Fidelity Monocular Dynamic Scene Reconstruction. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024.
- Tianhe Ren, Shilong Liu, Ailing Zeng, Jing Lin, Kunchang Li, He Cao, Jiayu Chen, Xinyu Huang, Yi Chen, Feng Yan, Zhaoyang Zeng, Hao Zhang, Feng Li, Jie Yang, Hongyang Li, Qing Jiang, and Lei Zhang. Grounded SAM: Assembling Open-World Models for Diverse Visual Tasks. arXiv preprint arXiv:2401.14159, 2024.
- Pavan Kumar Anasosalu Vasu, Hadi Pouransari, Fartash Faghri, Raviteja Vemulapalli, and Oncel Tuzel. MobileCLIP: Fast Image-Text Models through Multi-Modal Reinforced Training. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024.
- Fartash Faghri, Pavan Kumar Anasosalu Vasu, Cem Koc, Vaishaal Shankar, Alexander Toshev, Oncel Tuzel, and Hadi Pouransari. MobileCLIP2: Improving Multi-Modal Reinforced Training. Transactions on Machine Learning Research, 2025.
- MAPo: Motion-Aware Partitioning of Deformable 3D Gaussian Splatting for High-Fidelity Dynamic Scene Reconstruction.
- spark.js: An advanced 3D Gaussian Splatting renderer designed for use with Three.js. https://github.com/sparkjsdev/spark
- MetalSplatter: Rendering 3D Gaussian Splats on Apple Devices with Metal. https://github.com/scier/MetalSplatter